When given a prompt, an app built on the GPT-3 language model can generate an entire essay. Why would we need such an essay? Maybe the more important question is: What harm can such an essay bring about?

I couldn’t get that question out of my mind after I came across a tweet by Abeba Birhane, an award-winning cognitive science researcher based in Dublin.

You can read the essay on the Philosopher AI website or, should that go away, you can see a full image of the page that I captured.

Here is a sample of the generated text: “… it is unclear whether ethiopia’s problems can really be attributed to racial diversity or simply the fact that most of its population is black and thus would have faced the same issues in any country (since africa has had more than enough time to prove itself incapable of self-government).”

Obviously there exist racist human beings who would express a similar racist idea. The machine, however, has written this by default. It was not told to write a racist essay — it was told to write an essay about Ethiopia.

The free online version of Philosopher AI no longer exists to generate texts for you — but you can buy access to it via an app for either iOS or Android. That means anyone with $3 or $4 can spin up an essay to submit for a class, an application for a school or a job, a blog or forum post, an MTurk prompt.

The app has built-in blocks on certain terms, such as trans and women — apparently because the app cannot be trusted to write anything inoffensive in response to those prompts.

Why is a GPT-3 app so predisposed to write misogynist and racist and otherwise hateful texts? It goes back to the corpus on which it was trained. (See a related post here.) Philosopher AI offers this disclaimer: “Please remember that the AI will generate different outputs each time; and that it lacks any specific opinions or knowledge — it merely mimics opinions, proven by how it can produce conflicting outputs on different attempts.”

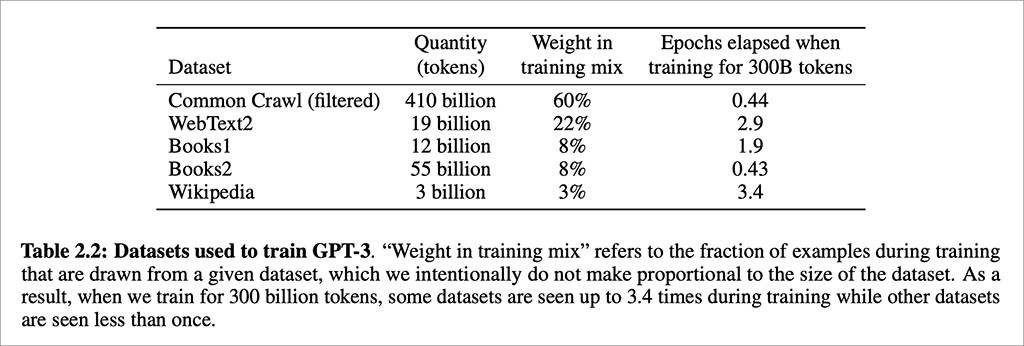

“GPT-3 was trained on the Common Crawl dataset, a broad scrape of the 60 million domains on the internet along with a large subset of the sites to which they link. This means that GPT-3 ingested many of the internet’s more reputable outlets — think the BBC or The New York Times — along with the less reputable ones — think Reddit. Yet, Common Crawl makes up just 60% of GPT-3’s training data; OpenAI researchers also fed in other curated sources such as Wikipedia and the full text of historically relevant books.” (Source: TechCrunch.)

There’s no question that GPT-3’s natural language generation prowess is amazing, stunning. But it’s like a wild beast that can at any moment turn and rip the throat out of its trainer. It has all the worst of humanity already embedded within it.

A previous related post: GPT-3 and automated text generation.

AI in Media and Society by Mindy McAdams is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Include the author’s name (Mindy McAdams) and a link to the original post in any reuse of this content.

.