I guess it should be no surprise that people want to talk about sentient machines when the term artificial intelligence has become more common than bread and butter. I was hoping this July 2022 article in The Wall Street Journal would go further than it does to assert that there are no grounds at all for talking about sentience in today’s AI, but I was disappointed. The two authors at least did not try to “both sides” the spurious claims.

First, they state that there are a lot of exaggerated claims from companies selling so-called AI products and “solutions.” Second, they touch on the danger this holds for policy decisions — when our elected officials, lawyers, judges, etc., don’t have a clear idea of how AI systems work, they are bound to make poor laws and poor rulings. AI ethicists warn that the hype is “distorting policy makers’ views of the power and fallibility” of AI systems. The reporters quote Oren Etzioni, CEO of the nonprofit Allen Institute for Artificial Intelligence, as saying policy makers are “well-intentioned and ask good questions, but they’re not super well-informed.”

“The belief that AI is becoming — or could ever become — conscious remains on the fringes in the broader scientific community, researchers say.”

—Hao and Kruppa, in “Tech Giants Pour Billions into AI, but Hype Doesn’t Always Match Reality”

The WSJ article also covers a (now former) Google engineer who claimed the LaMDA chatbot is sentient. On July 22, The Verge was among several news organizations reporting that the engineer had been fired. The Verge’s article links to a YouTube video that explains “how LaMDA works and how it could produce the responses that convinced [the engineer] without actually being sentient.”

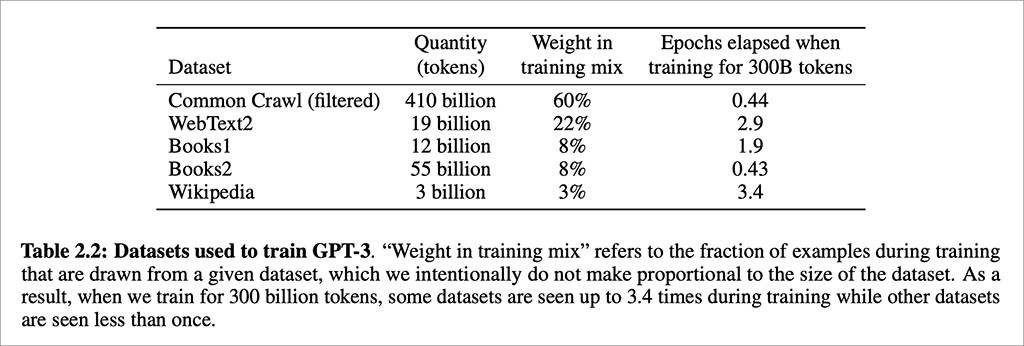

I was dismayed that the media gave so much attention to the engineer’s claims — which he never should have made in the first place, being an engineer. If you take some time to learn about how chatbots are created (or voice assistants — my undergrad college students are decidedly unimpressed with Siri and Alexa), you’ll understand that they cannot possibly have sentience. These conversational systems are prediction machines — they predict “the likelihood of a token (character, word or string) given either its preceding context or … its surrounding context” (source: Bender et al., 2021). The results can be astoundingly good, or hilariously awful. Either way, the process that generates the responses is the result of computational predictions and not the product of a sentient being.
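To make “prediction machine” concrete, here is a minimal sketch in Python of next-token prediction using a toy bigram model, which only counts which word follows which. This is an illustration under loud assumptions: it is not how LaMDA or any production chatbot is built (those use large neural networks trained on enormous text collections), and the corpus and the function names `train_bigram_model` and `next_token_probabilities` are made up for this example. The underlying idea is the same, though: given the preceding context, assign a likelihood to each possible next token.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count how often each word follows each other word (hypothetical helper)."""
    tokens = text.lower().split()
    follow_counts = defaultdict(Counter)
    # Pair every token with the token that comes right after it.
    for prev, nxt in zip(tokens, tokens[1:]):
        follow_counts[prev][nxt] += 1
    return follow_counts


def next_token_probabilities(model, prev):
    """Turn the raw counts for one preceding word into probabilities."""
    counts = model.get(prev, Counter())
    total = sum(counts.values())
    if total == 0:
        return {}  # the model has never seen this word, so it has no guess
    return {token: n / total for token, n in counts.items()}


# Toy corpus, invented for illustration only.
toy_corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram_model(toy_corpus)
probs = next_token_probabilities(model, "the")

# Print the candidates for the word after "the", most likely first.
for token, p in sorted(probs.items(), key=lambda item: -item[1]):
    print(f"{token}: {p:.2f}")
```

In this toy corpus, “the” is followed by “cat” twice and by “mat” and “fish” once each, so the model rates “cat” as the most likely next word. Nothing in the program understands cats or fish. It only counts and divides.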

The same is true of the output from DALL-E (and the newer DALL-E 2), which creates an image based on a text description. The less you know about today’s algorithms and powerful AI hardware, the more likely you are to wonder whether there’s a humanlike intelligence behind the system that can produce these graphics. The output is extraordinary, and it was not possible just a few years ago. What makes it possible today is the combination of massively parallel computational structures and the algorithms designed by humans to enable really, really good guesses (predictions) at what images would best match the descriptive text.

When I say we shouldn’t talk about sentience, I am being a bit coy. I do think we should be talking about what constitutes intelligence in humans and animals, how we know an entity is conscious, and what it means to think, to feel, to perceive the world. I don’t think we should be looking for sentience in our computers — not today and not for a long, long time to come. It distracts us from what today’s AI systems are actually doing, which is making guesses that then affect real sentient humans’ real lives.


AI in Media and Society by Mindy McAdams is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Include the author’s name (Mindy McAdams) and a link to the original post in any reuse of this content.
