Sometimes I come across a video on YouTube that’s almost too simple, and that’s exactly what makes it great. Andy Kim, a junior at the elite prep school Deerfield Academy in Massachusetts, gave a local TED Talk about sentiment analysis, and I think it’s ideal for anyone who has spent a little time learning about image recognition but has not yet studied much natural language processing.

Your first thought might be that detecting the sentiment of a tweet, a movie review, or a response to customer service is just a matter of word definitions. Love is a positive word; hate is a negative word.

But as Melanie Mitchell wrote in Artificial Intelligence: A Guide for Thinking Humans (2019): “Looking at single words or short sequences in isolation is generally not sufficient to glean the overall sentiment; it’s necessary to capture the semantics of words in the context of the whole sentence” (p. 183; my emphasis).

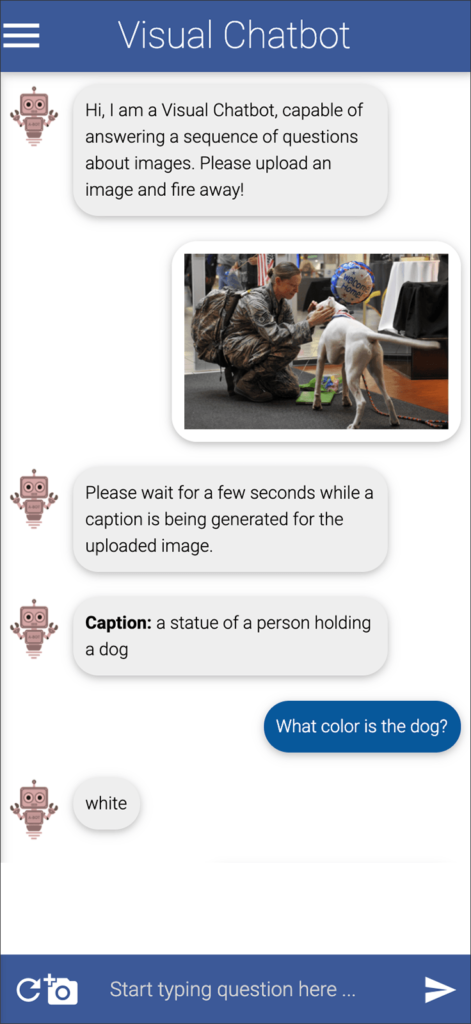

Kim, in his TED Talk, does a good job of explaining how words are represented as vectors, and how this enables complex associations with similar or related terms. He doesn’t use a diagram of three-dimensional space (which I find helpful for conceptualizing this in my own mind); instead he refers to “an n dimensional space,” which I think my journalism students might not instantly visualize.

“These word vectors can span from 25 up to a thousand components. Now, conveniently, as these vectors are still simply a list of numbers, they can be plotted on an n dimensional space …”

—Andy Kim

In computer programming, a vector is a list of values, which you can think of as the coordinates of a point. In a two-dimensional space, you might have x and y, with the value of x representing the point’s position on a horizontal line, and the value of y representing the point’s position on a vertical line. Add a third dimension, and you have a third coordinate, z.

To simulate more dimensions, we add even more values to the list. A single word will have a list of many values, and those values signify its relations to other words in the collection of all words in the system.
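To make the idea of "a list of values" concrete, here is a minimal Python sketch. The three-component vectors and their numbers are invented for illustration (they do not come from Kim's talk or from any trained model), and cosine similarity is just one common way to compare two word vectors, not necessarily the one Kim used.

```python
import math

# Toy 3-dimensional word vectors. The values are made up for this example;
# real word vectors have many more components and are learned from text.
toy_vectors = {
    "love": [0.9, 0.8, 0.1],
    "adore": [0.85, 0.75, 0.2],
    "bicycle": [0.1, -0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


love_adore = cosine_similarity(toy_vectors["love"], toy_vectors["adore"])
love_bicycle = cosine_similarity(toy_vectors["love"], toy_vectors["bicycle"])

# Vectors pointing in nearly the same direction score close to 1;
# unrelated directions score close to 0.
print(f"love vs. adore:   {love_adore:.2f}")
print(f"love vs. bicycle: {love_bicycle:.2f}")
```

The same function works unchanged on vectors with 25 or a thousand components, which is the practical meaning of "n dimensional" here: the list just gets longer.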

At about the middle of his talk, Kim makes it perfectly clear why so many dimensions are needed to represent relationships among terms that have multiple meanings.

Kim goes on to talk about the labeled data for training a system to detect, or recognize, sentiment in text. He used a freely available dataset from Kaggle, probably the Sentiment140 dataset with 1.6 million tweets. (Another widely used dataset for sentiment analysis training is the IMDB Dataset of 50K Movie Reviews.) Kim also demonstrates cleaning the Twitter data so that usernames, hashtags and stop words are eliminated.
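The cleaning step can be sketched in a few lines of Python. This is my own rough approximation, not Kim's code: the `clean_tweet` function, the regular expressions, and the tiny stop word list are all assumptions made for illustration, and a real project would likely use a much longer stop word list.

```python
import re

# A tiny, hand-picked stop word list (an assumption for this sketch).
STOP_WORDS = {"a", "an", "the", "is", "to", "and", "of", "in", "my", "i", "so"}


def clean_tweet(text):
    """Lowercase a tweet, drop usernames, hashtags, and stop words."""
    text = text.lower()
    text = re.sub(r"@\w+", " ", text)  # remove @usernames
    text = re.sub(r"#\w+", " ", text)  # remove #hashtags
    tokens = re.findall(r"[a-z']+", text)  # keep runs of letters and apostrophes
    return [t for t in tokens if t not in STOP_WORDS]


print(clean_tweet("@bob I love the new phone so much! #happy"))
# prints ['love', 'new', 'phone', 'much']
```

Note that this sketch throws away the whole hashtag, including the word inside it. Whether that is the right choice depends on the dataset, since a hashtag such as #happy can itself carry sentiment.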

Kim used the GloVe algorithm to construct vectors for the words in his dataset, but he skips over the details of the training and just tells us that he wasn’t very successful; his model reached only 60 percent accuracy. He closes by summarizing some of the uses of sentiment analysis.

AI in Media and Society by Mindy McAdams is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

Include the author’s name (Mindy McAdams) and a link to the original post in any reuse of this content.
